If it were trained on all the literature on seismology, statistics, and computer science, could an A.I. generate a passable review article titled ‘Big Data Seismology’? I have to admit, this was the question I asked myself when reading about the capabilities of the latest large language models in a recent New York Times article (https://www.nytimes.com/2022/04/15/magazine/ai-language.html). By being trained to predict only one word at a time, but using all our collective digital written content, these neural network models are somehow achieving apparently human-level feats of language. I’ve just finished the (human) exercise of writing an article by that title, with five (human) collaborators, without whom it would not have been possible. Our article is now published in Reviews of Geophysics: https://doi.org/10.1029/2021RG000769 . Part of our article explores the application of similar types of A.I. models for replacing humans in seismic data processing, so it doesn’t seem too far-fetched to ask whether the machines could also replace us in writing about seismology.

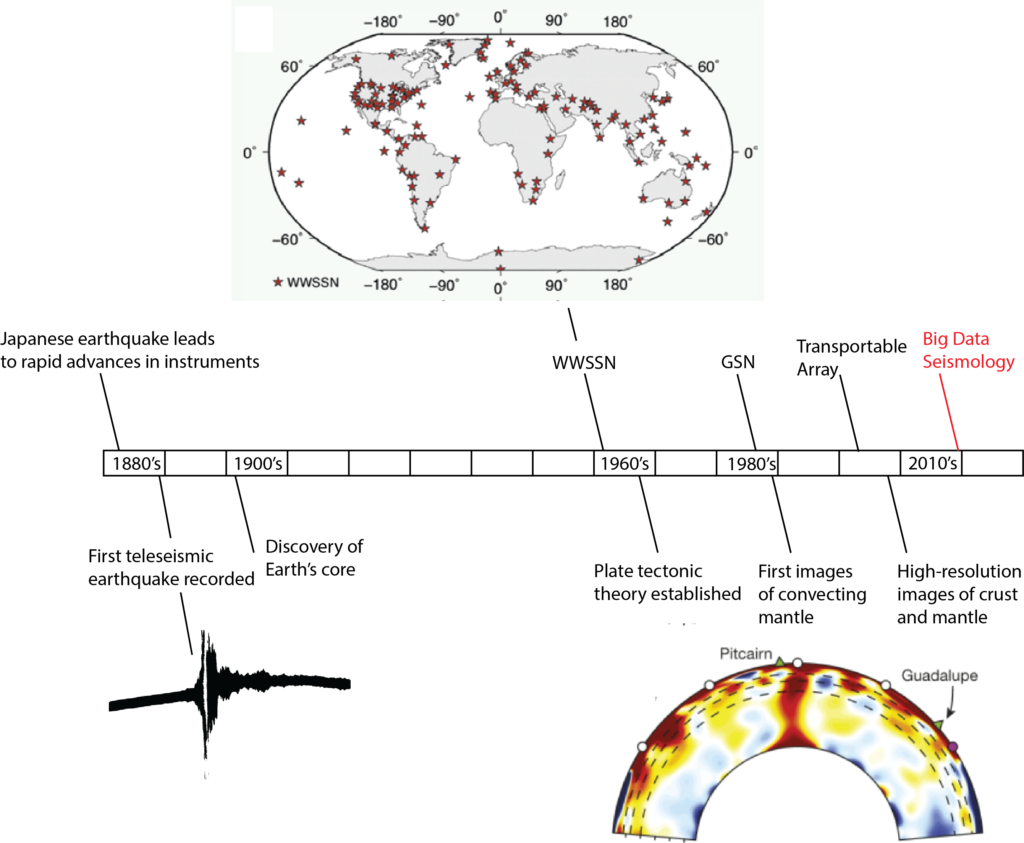

Seismology is a discipline that relies on recordings of ground motion (data) for humans to study earthquakes and the solid Earth. In this sense, seismology is an empirical discipline. The most significant discoveries in seismology have been enabled by data, interpreted by neurons firing in human brains (biological processes that invent these things we call ‘models’). Because of the centrality of data in this discovery process, I argue that the most significant innovations in seismology have followed innovations in data acquisition. This is presented in the timeline below.

The innovations in data acquisition since the 2010s have been synchronous with innovations in processing and computing. We argue in ‘Big Data Seismology’ that a new type of seismological inquiry is now possible due to the confluence of advances in data, algorithms, and computing. We explore how Big Data Seismology is already enabling advances in the study of earthquake processes and in seismic imaging, and how it is opening up new avenues of seismological research. Finally, we discuss the opportunities and challenges associated with the big data revolution in seismology. Please check out the paper for more details on these issues!

A review article synthesizes the research in a field of study. Unlike most other scientific articles, which present original research, review articles take a step back and summarize what a field has done or where it is going. At present, this is done (by humans) by reading the literature and looking for themes that tie it together. It’s a lot of work, but very rewarding! If machines could do this just as effectively (or better) in the future, would that mean we’ve reached human-type intelligence, or could the exercise be repeated by learning one word at a time? I’m not sure myself, but I like to hope that writing a good review article requires more than learning in this way.